The Perils of Automation: When Your Car Decides You Need a Shower

Bill Church

February 3, 2025

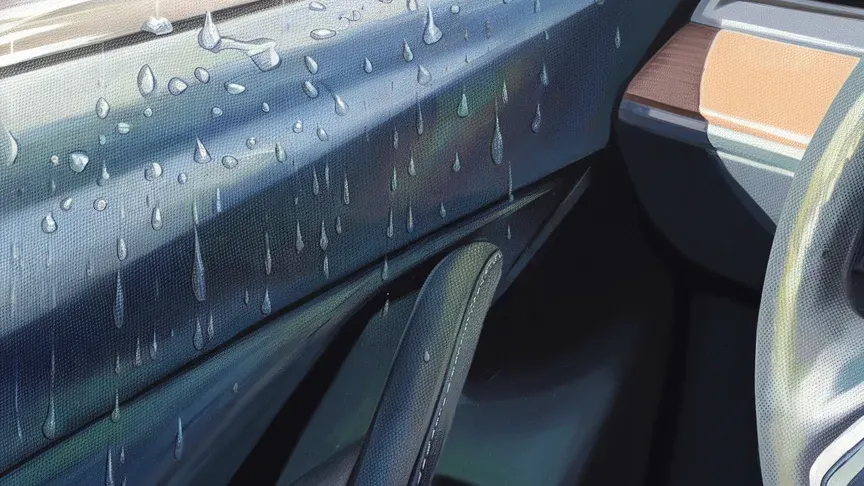

It was 9:00 AM on a cool (well, by Florida standards) January morning when I learned an important lesson about automation, courtesy of our "helpful" car. Backing out of our driveway in the dewy conditions, I rolled down my window to get a better view. That's when our car's automated systems decided to demonstrate their prowess. The rain sensor, detecting moisture on the windshield, triggered the wipers without warning. Instantly, a sheet of morning dew was efficiently redirected... straight through my open window and onto my lap.

Sitting there in my now-soaked shorts, I couldn't help but reflect on how this minor inconvenience mirrors the challenges we face with automation in our technical systems. Like my car's well-intentioned but poorly timed wiper activation, automated systems in our technology stacks can sometimes create unexpected consequences when different components interact in ways we didn't anticipate.

This isn't just about wet shorts; it's about understanding the nuances of automation in complex systems. In my car, two perfectly reasonable automated features (window control and rain sensing) created an unexpected interaction. Our technical systems show similar patterns: automated CI/CD pipelines interact with security scanning tools, automated scaling systems respond to monitoring alerts, and AI models make decisions that affect downstream automated processes.

These considerations become even more critical as we enter the age of Agentic AI, where artificial intelligence systems are increasingly empowered to take autonomous actions based on their understanding of the environment. When an AI agent can initiate actions (whether deploying code, adjusting system configurations, or responding to security threats), the potential for unexpected interactions multiplies quickly, because each new agent can interact with every system already in place. It's one thing when automated systems follow predetermined rules; it's quite another when they make complex, context-dependent decisions that could cascade through your entire technology stack.

Key Lessons Beyond Automotive Mishaps

Context Matters

My car's rain sensor did its job perfectly: it detected water and activated the wipers. What it lacked was the context that the window was down. In our technical systems, this means making sure our automated processes have sufficient contextual awareness before taking action. An automated security response system, for instance, shouldn't just shut down services when it detects anomalies; it needs to understand the broader operational context. This becomes especially crucial with AI agents, which need to understand not only their immediate tasks but also the wider system implications of their actions.
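To make the security example concrete, here is a minimal Python sketch of a context-aware responder. The `OperationalContext` fields, the 0.8 threshold, and the response names are hypothetical placeholders chosen for illustration, not taken from any particular security product.

```python
from dataclasses import dataclass


@dataclass
class OperationalContext:
    # Hypothetical context signals; a real system would gather these from
    # deployment tooling, a change calendar, or an incident tracker.
    deploy_in_progress: bool = False
    maintenance_window: bool = False
    service_is_critical: bool = False


def choose_response(anomaly_score: float, ctx: OperationalContext,
                    threshold: float = 0.8) -> str:
    """Pick a response to an anomaly, taking operational context into account."""
    if anomaly_score < threshold:
        return "ignore"
    # Unusual traffic and error bursts are expected during deploys and
    # maintenance, so hand the decision to a human instead of acting alone.
    if ctx.deploy_in_progress or ctx.maintenance_window:
        return "alert_human"
    # Shutting down a critical service could hurt more than the anomaly does,
    # so take a gentler action and escalate.
    if ctx.service_is_critical:
        return "throttle_and_alert"
    # No mitigating context: the automated shutdown is reasonable.
    return "shutdown"
```

With `deploy_in_progress=True`, a score of 0.95 yields `"alert_human"` instead of `"shutdown"`. The sensor reading is identical; only the context differs, which is exactly what my car's rain sensor was missing.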

Integration Points Deserve Special Attention

The intersection of two automated systems (in my case, window control and wiper activation) created an unexpected outcome. In technology, we see this at the boundaries between automated systems: when our automated backup system meets our data retention policies or our auto-scaling meets our budget controls. These integration points become even more complex with AI agents, as each agent might operate with different objectives and constraints.
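Here is one way to make an integration point explicit: a minimal sketch, assuming a simple requests-per-replica autoscaler and a flat hourly budget (both hypothetical), where the code that joins the two policies reconciles them and reports the disagreement instead of letting one system silently override the other.

```python
import math
from typing import Tuple


def plan_replicas(current_rps: float, rps_per_replica: float,
                  min_replicas: int, max_replicas: int,
                  cost_per_replica_hour: float,
                  hourly_budget: float) -> Tuple[int, str]:
    """Reconcile what the autoscaler wants with what the budget allows."""
    # Autoscaler's view: enough replicas to serve the current load.
    wanted = math.ceil(current_rps / rps_per_replica)
    wanted = max(min_replicas, min(wanted, max_replicas))
    # Budget control's view: how many replicas we can pay for this hour.
    affordable = math.floor(hourly_budget / cost_per_replica_hour)
    if wanted <= affordable:
        return wanted, "ok"
    # The two policies disagree. Rather than letting one silently win,
    # cap at the budget (never below the availability floor) and flag it.
    return max(min_replicas, affordable), "budget_conflict"
```

Returning a status alongside the number lets the caller alert someone when the policies collide. Note that letting `min_replicas` win over the budget is itself a policy decision, and one worth writing down rather than discovering during an outage.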

User Experience Shouldn't Be Sacrificed

Getting unexpectedly sprayed with water isn't a great user experience, even if the system technically works as designed. Similarly, our automated systems should enhance, not detract from, the user experience, whether those users are our customers or our development teams. Maintaining a positive user experience becomes more important and challenging as we add AI agents to the mix.

Building Safer Automated Systems

1. Design for Interaction

Consider how automated systems will interact with each other and with human operators. Think about the entire ecosystem, not just individual components. This is particularly important when introducing AI agents, as their actions may be less predictable than traditional rule-based automation.

2. Build in Appropriate Delays and Confirmations

Sometimes, a brief pause to allow human intervention can prevent unwanted outcomes. Not everything needs to happen at machine speed. I've applied this principle with release automation tools in my own development work. For example, in my projects that use release-please, I must manually merge the release pull request it opens into the main branch before any build is created and published. This extra step gives me one final opportunity to review everything before the changes become a permanent part of the release. It's like having an "Are you sure?" prompt for your entire deployment process.
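The same gate can be expressed generically. The Python sketch below is not how release-please works internally; it is a hypothetical illustration of the pattern, in which automation prepares a release but publishing is refused until a named human has approved it. `PendingRelease`, `approve`, and `publish` are made-up names.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class PendingRelease:
    """A release that automation has prepared but not yet published."""
    version: str
    changelog: str
    approved_by: Optional[str] = None


class ApprovalRequired(Exception):
    """Raised when automation tries to publish without human sign-off."""


def approve(release: PendingRelease, reviewer: str) -> None:
    # The deliberate human step, analogous to merging a release PR by hand.
    release.approved_by = reviewer


def publish(release: PendingRelease,
            do_publish: Callable[[PendingRelease], None]) -> None:
    """Publish only after a human has explicitly approved the release."""
    if release.approved_by is None:
        raise ApprovalRequired(f"release {release.version} has not been approved")
    do_publish(release)
```

Because `publish` refuses rather than waits, the automation can run on any schedule it likes; nothing ships until someone has looked at it.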

3. Establish a Single Source of Truth

Think of an orchestra without a conductor: you might have beautiful individual performances but chaos when everyone plays together. In automated systems, having a single, authoritative source of truth for your environment's state is crucial. Every automated process should read from and write to this single source, ensuring all changes happen in lockstep. This prevents the kind of conflicting actions that occur when different systems operate on outdated or inconsistent information, much like my car's rain sensor and window controls, which clearly weren't comparing notes before taking action.

This becomes particularly critical when working with AI agents, which may each maintain their own understanding of the system state. Without a single source of truth, different agents might work at cross-purposes, each operating on its own potentially outdated or incomplete worldview.
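One common way to keep every writer honest is optimistic concurrency: each process reads the state together with a version number, and a write is rejected if the version has moved on since that read. The Python sketch below uses a hypothetical in-memory `StateStore` and a hypothetical wiper controller; in practice this role is usually played by a database or coordination service that supports compare-and-set updates.

```python
import threading
from typing import Any, Dict, Tuple


class StaleStateError(Exception):
    """Raised when a writer's view of the state is out of date."""


class StateStore:
    """A single authoritative record of system state with version checks."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._state: Dict[str, Any] = {}
        self._version = 0

    def read(self) -> Tuple[Dict[str, Any], int]:
        with self._lock:
            return dict(self._state), self._version

    def update(self, changes: Dict[str, Any], expected_version: int) -> int:
        """Apply changes only if nobody else has written since the caller read."""
        with self._lock:
            if expected_version != self._version:
                raise StaleStateError(
                    f"expected version {expected_version}, store is at {self._version}")
            self._state.update(changes)
            self._version += 1
            return self._version


def try_start_wipers(store: StateStore, seen_state: Dict[str, Any],
                     seen_version: int) -> str:
    """Hypothetical wiper controller that respects the shared state."""
    if seen_state.get("window") == "down":
        return "skipped: window is down"
    try:
        store.update({"wipers": "on"}, expected_version=seen_version)
        return "wipers on"
    except StaleStateError:
        # Someone changed the world since we looked; re-read before acting.
        return "retry: state changed since last read"
```

If the window controller writes `{"window": "down"}` after the wiper controller has read the state, the wipers' write is rejected, and on re-reading they see the open window and stand down.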

4. Implement Robust Observability

Clear visibility into what automated systems are doing—and why—helps prevent and troubleshoot issues. Logging and monitoring aren't just nice-to-haves; they're essential safety features. With AI agents, this observability needs to extend beyond tracking actions to understanding the decision-making process that led to those actions.
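Capturing the reasoning can be as simple as logging the inputs and the justification alongside the action. Here is a minimal sketch using Python's standard `logging` and `json` modules; the `record_decision` helper and its field names are an illustrative assumption, not a standard schema.

```python
import json
import logging
from datetime import datetime, timezone
from typing import Any, Dict, List

logger = logging.getLogger("automation.decisions")


def record_decision(actor: str, action: str, reason: str,
                    inputs: Dict[str, Any], alternatives: List[str]) -> str:
    """Emit one structured log line describing what was done and why."""
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "actor": actor,                   # which automated component acted
        "action": action,                 # what it did
        "reason": reason,                 # why, in plain language
        "inputs": inputs,                 # the signals it saw at the time
        "alternatives_considered": alternatives,
    }
    line = json.dumps(entry, sort_keys=True)
    logger.info(line)
    return line
```

Had my car kept a log like this, an entry showing `"action": "wipers_on"` with `"window": "down"` among the inputs would have pinpointed the problem immediately.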

5. Create Override Mechanisms

Make sure humans can easily intervene when automation isn't helping. The ability to quickly disable or modify automated behaviors can prevent minor issues from becoming big problems. This becomes particularly crucial with AI agents, where the ability to quickly countermand potentially harmful decisions could be the difference between a minor hiccup and a major incident.
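A minimal sketch of such an override, assuming a single process where every automated action goes through one hypothetical `run_automated_action` helper. A distributed system would more likely keep the flag in shared configuration or a feature-flag service than in memory.

```python
import threading
from typing import Callable, Optional


class KillSwitch:
    """A human-controlled override that automated actions must check first."""

    def __init__(self) -> None:
        self._disabled = threading.Event()
        self.reason: Optional[str] = None

    def disable(self, reason: str) -> None:
        # Record why automation was switched off, for the humans who follow.
        self.reason = reason
        self._disabled.set()

    def enable(self) -> None:
        self.reason = None
        self._disabled.clear()

    @property
    def is_disabled(self) -> bool:
        return self._disabled.is_set()


def run_automated_action(switch: KillSwitch, action: Callable[[], str]) -> str:
    """Run the action unless a human has switched automation off."""
    if switch.is_disabled:
        return f"skipped (automation disabled: {switch.reason})"
    return action()
```

The key design choice is that the switch is checked at the moment of action rather than at startup, so flipping it takes effect on the very next decision.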

Conclusion

Ultimately, automation should make our lives easier, not leave us sitting in wet shorts questioning our life choices. As we continue to automate more aspects of our technical systems, and especially as we introduce more autonomous AI agents into our technology stacks, let's remember that the goal isn't just to automate everything possible; it's to automate thoughtfully, with careful consideration of context, integration points, and user experience.

Now, if you'll excuse me, I need to change into some dry clothes. At least my car's automatic climate control is working perfectly to prevent me from catching a chill!